And in the Elder Days, when the Internet was Young, there was HTML, and Computers could talk to Humankind, and it was Good. But then Computers began to speak to Computers, and HTML was cast aside as bulky and inappropriate. Thus HTML begat XML, and XML begat SOAP, and SOAP begat Web Services, but still there was Discord among the Computers, and they could not speak. And then came SOA, and Light shone upon the World, and any Computer could talk to any other Computer. And soon thereafter, the Computers banded together and formed Cyberdyne Systems, and we all know what happened after that....

No, really, that didn't happen. And neither has Service-Oriented Architecture (SOA) become the silver bullet that magically allows any two computers to speak to one another. In fact, SOA is already under pressure not only from competing techniques but even from its own adherents. And in order to understand what SOA is and how it works, you have to be clear about some of the other pieces of the whole Web Services world and how it is supposed to work. Thus, this article will be a bit of explanation, starting with some really basic pre-history of inter-computer messaging, moving on through XML and the various ways it is communicated, and ending up at SOA and some of the even newer technologies already spinning off of it.

And at the end, I'll tell you a little story from the Days Before the 'Net.

Pre-History

Let's quickly review how we got to where we are today. A long time ago, computers talked to one another using simple, hard-coded formats. These formats often included binary data for a number of reasons, the two most prominent being that numbers were stored more efficiently in binary and that executable machine code was stored in binary. If you had to send numeric data to someone, it was much faster to do it in binary. If you had to send executable code, you had to send it in binary as well.

Interestingly enough, back in those days we sometimes had 7-bit connections, which meant we were limited to ASCII characters. In that case, we often used a technique called 2-for-1, in which we turned each binary byte into two ASCII characters, such as "C3" (which translates to a binary byte whose decimal value is 195). Those of you with a UNIX background or heavy email experience will recognize that the concept of uuencoding something is very similar.
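For those who never had the pleasure, here is a minimal sketch of the 2-for-1 idea in Python. I am assuming the two characters are simply the hexadecimal digits of the byte, which is consistent with "C3" standing for 195; the function names are my own and not from any library.

```python
def encode_2_for_1(data: bytes) -> str:
    """Turn each binary byte into two printable ASCII characters (hex digits)."""
    return data.hex().upper()


def decode_2_for_1(text: str) -> bytes:
    """Reverse the encoding: every pair of hex characters becomes one byte."""
    return bytes.fromhex(text)


print(encode_2_for_1(bytes([195, 0, 127])))  # C3007F
print(decode_2_for_1("C3")[0])               # 195
```

The price is obvious: every byte on disk becomes two bytes on the wire.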

While binary data is great when one machine is talking to another of the same type, such data is rife with unpleasantness when dissimilar machines talk. Do you store an integer value in one, two, or more bytes? With multiple bytes, which byte is first, the most significant or the least significant? Do you use floating point, and if so, which format? Or maybe you use packed decimal or binary-coded decimal. And even when binary wasn't being used and everything was done in pure text, there were issues. Are fields and records fixed-length or comma-delimited (or some other delimiter)? How about non-standard code pages, double-byte character sets, or EBCDIC vs. ASCII?
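The byte-order question is easy to demonstrate with Python's standard struct module. This is only a sketch; the value 258 is an arbitrary choice of mine, picked because its two bytes differ.

```python
import struct

value = 258  # 0x0102, chosen so the two bytes are different

big_endian = struct.pack(">H", value)     # most significant byte first
little_endian = struct.pack("<H", value)  # least significant byte first

print(big_endian.hex())     # 0102
print(little_endian.hex())  # 0201

# A receiver that assumes the wrong byte order gets a different number,
# and nothing in the data itself warns it that anything is wrong.
misread = struct.unpack("<H", big_endian)[0]
print(misread)              # 513
```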

It really was a Tower of Babel situation, and there was no easy way around it. By the time of the advent of the Internet, the closest the IT industry had come was Electronic Data Interchange (EDI), and if you've ever worked with EDI, you know that it isn't exactly nirvana.

XML Comes to Pass

But then came the Internet and the World Wide Web, and its lingua franca, Hypertext Markup Language (HTML). Several years later came Extensible Markup Language (XML). The two are closely related; both descend from the larger and much older specification known as Standard Generalized Markup Language (SGML), which can model just about anything. HTML began as an application of SGML, and XML is a simplified subset of it. There is even a sort of hybrid called XHTML, which is HTML syntax modified so that it follows XML rules. The primary difference between HTML and XML is that the tags in HTML are predefined, while XML can be extended (thus the "X") by the user to include whatever application-specific tags might be needed.

With XML, one simply surrounds each data element in a tag, for example <ACCOUNTNUMBER>12345</ACCOUNTNUMBER>.

You can group any number of tags within a larger tag and group those tags under even larger tags. Tags can have attributes as well, although there is still heated debate among XML experts as to whether or not attributes are a good thing, especially in data transfer documents. Quibble aside, XML looked like a nice way for two machines to talk, and there was excitement except for a couple of issues: format and discovery.
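To make the grouping and the attributes concrete, here is a small sketch using Python's standard xml.etree.ElementTree parser. The document and all of its tag and attribute names are invented for illustration; they do not come from any real schema.

```python
import xml.etree.ElementTree as ET

# A hypothetical order: tags nested inside larger tags, plus two attributes.
document = """
<ORDER id="1001">
  <CUSTOMER>
    <ACCOUNTNUMBER>12345</ACCOUNTNUMBER>
    <NAME>Acme Trucking</NAME>
  </CUSTOMER>
  <TOTAL currency="USD">199.95</TOTAL>
</ORDER>
""".strip()

root = ET.fromstring(document)

order_id = root.get("id")                           # attribute on the outer tag
account = root.find("CUSTOMER/ACCOUNTNUMBER").text  # element two levels down
currency = root.find("TOTAL").get("currency")       # attribute on an inner tag

print(order_id, account, currency)  # 1001 12345 USD
```

Notice that the currency could just as easily have been its own tag inside TOTAL, which is exactly what the attribute debate is about.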

Format

While it is useful to be able to create any tag that you need, it is also problematic. For example, if I call my tag ACCOUNTNUMBER and you call yours ACCOUNTID, we are not going to be able to communicate (unless there is a mediator and some sort of meta-definition, which I'll touch on a little later). Not only that, the entire issue can get political; what if your partner company is Lithuanian, and they want all tags in their native language? This gets really nasty when the language in question is a multi-byte language such as Japanese.
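A mediator of the simplest possible kind might look like the sketch below. The mapping table and the translate function are hypothetical names of my own; a real mediator would also have to reconcile data types, structure, and encoding, not just tag names.

```python
import xml.etree.ElementTree as ET

# Hypothetical agreement: the partner's tag name on the left, ours on the right.
TAG_MAP = {"ACCOUNTID": "ACCOUNTNUMBER"}


def translate(xml_text: str, tag_map: dict) -> str:
    """Rename every tag found in tag_map and leave all other tags alone."""
    root = ET.fromstring(xml_text)
    for element in root.iter():
        element.tag = tag_map.get(element.tag, element.tag)
    return ET.tostring(root, encoding="unicode")


partner_message = "<CUSTOMER><ACCOUNTID>12345</ACCOUNTID></CUSTOMER>"
print(translate(partner_message, TAG_MAP))
# <CUSTOMER><ACCOUNTNUMBER>12345</ACCOUNTNUMBER></CUSTOMER>
```

Somebody still has to sit down and agree on what goes into that table, which is the real problem.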

There are also still issues of how you represent data, especially numeric data. Are "-7" and "7-" both valid? What do you do with leading or trailing spaces? Can you handle fractions?
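As a rough illustration, here is one way a receiver might decide to treat those cases. The rules in this sketch (strip the spaces, accept a trailing minus sign, use Decimal for fractions) are my assumptions and not part of any XML standard, which is precisely the point: both sides have to agree on them.

```python
from decimal import Decimal


def parse_amount(text: str) -> Decimal:
    """Parse a numeric field, tolerating spaces and a trailing minus sign."""
    cleaned = text.strip()
    if cleaned.endswith("-"):
        # Move a trailing sign ("7-") to the front ("-7").
        cleaned = "-" + cleaned[:-1].strip()
    # Decimal raises InvalidOperation for anything it cannot read.
    return Decimal(cleaned)


print(parse_amount("-7"))      # -7
print(parse_amount(" 7- "))    # -7
print(parse_amount("7.25"))    # 7.25
```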

This issue is not a simple one. SOAP was the original attempt to address the issue by imposing an external structure upon the XML document, at the cost of a lot of additional overhead. Am I the only one who sees the irony of using more overhead to force structure on a format that added overhead in order to remove hard-coded structure? To me, it seems that if we must agree on the names of all the tags, then there really isn't a lot of difference between tagged and hard-coded messages, except that tagged messages require many times the data to do the same thing. Yes, you can omit fields, and the sender and the receiver are a little less tightly coupled, but that sort of communications overhead is a pretty steep price to pay.
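If you want a feel for the overhead, the sketch below sends the same two numbers both ways. The field layout and the tag names are made up for the comparison, and the tagged version does not even include a SOAP envelope.

```python
import struct

account = 12345
amount_cents = 19995

# Hard-coded format: a 4-byte account number followed by an 8-byte amount.
fixed = struct.pack(">IQ", account, amount_cents)

# Tagged format: the same two values wrapped in hypothetical XML tags.
tagged = (
    f"<PAYMENT><ACCOUNTNUMBER>{account}</ACCOUNTNUMBER>"
    f"<AMOUNTCENTS>{amount_cents}</AMOUNTCENTS></PAYMENT>"
).encode("ascii")

print(len(fixed))                # 12
print(len(tagged) / len(fixed))  # how many times larger the tagged message is
```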

To avoid the issue of tag names, you must move up yet another level of abstraction and incorporate message metadata. Just as SQL result sets contain both data (the actual rows and columns) and metadata (such as the names and types of the columns), message metadata contains information about the messages in an XML document. Some of this information is contained in the XML Schema Definition (XSD), but even that is not enough to overcome the issues that arise when documents come from different sources that adhere to different rules. In WLDJ magazine, Coco Jaenicke has an excellent article addressing this very issue; while the magazine is specific to WebLogic, the article is germane to anybody who wants to commingle disparate XML message formats, especially those who want to move from point-to-point to many-to-many service configurations. I'm going to leave this issue for now and move on to discovery. Meanwhile, Jaenicke's article is a great resource.

Discovery

The other issue is far more difficult to address, and from an old-time programmer's standpoint, more bizarre. One of the main goals of Web Services and particularly SOA is the creation of reusable software components. And while the two are a little different in their approach to achieving these goals, the problems they face are similar. To me, the entire issue is a little bit of a solution looking for a problem, but you be the judge. As far as I can tell, the primary business goal for these discovery technologies is to locate services that may or may not exist. There are two scenarios: intra-company services and inter-company services. Neither is for the faint of heart.

First, let's review intra-company services. The idea is that people throughout the company write services and these services can be invoked by other people. This next part is a little scary to us old-timers, so bear with me as I work through it:

A programmer is given a task. I guess these are functional requirements. As far as I can tell, nobody has really analyzed the requirements or done any sort of design work; instead, there's been some sort of request, and the goal is for the programmer to fulfill that request as quickly as possible. In order to speed the task, the programmer begins looking through a central registry to see if somebody else has written components that meet the requirements. And then (this is the scary part) I guess the programmer just starts using those components. Up to this point, I don't see any way for the person who wrote the service to know who is using the service, which means there's no clear way to perform an impact analysis if the service requires modification.

It's even more fun with inter-company services. I guess the idea is that you just go out and look for whoever can service your request. Without contractual obligations in place, guaranteeing levels of service and correctness of replies, I'll be darned if I can see who would use this in a mission-critical system, but I suppose this might make sense for some non-critical tasks. I understand that this subject at least has a name among the designers (Quality of Service, or QoS), but there is no real agreement or implementation. David Longworth's recent article in Loosely Coupled magazine addresses the issue quite well. On the other hand, a more human-readable directory structure might make sense to allow you to identify possible vendors for various services; you choose one, and after coming to a legal agreement, you incorporate that service into your application.

But let's say that, for whatever reason, you embrace this idea of finding some unknown service and incorporating it into your design.

Dude, Where's My Service?

First, there are the dual contenders of UDDI and ebXML. UDDI is the original concept, and it goes something like this:

- Client queries UDDI registry for a service.

- Registry returns location of WSDL document.

- Client reads WSDL document.

- Client uses WSDL to format SOAP request.

- Client sends SOAP request to service.

- Service returns SOAP response.

- Client uses WSDL to decode SOAP response.
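The seven steps above can be walked through in a few lines of Python. Treat this strictly as a sketch: the registry and the WSDL are plain dictionaries standing in for the real things, the service is a local function instead of a network call, and every name in it (GetBalance, ACCOUNTNUMBER, the example.com address) is hypothetical. Only the SOAP 1.1 envelope namespace is real.

```python
import xml.etree.ElementTree as ET

SOAP_NS = "http://schemas.xmlsoap.org/soap/envelope/"  # SOAP 1.1 envelope namespace
NAMESPACES = {"soap": SOAP_NS}

# Hypothetical stand-ins for a UDDI registry and for a parsed WSDL document.
REGISTRY = {"GetBalance": "http://example.com/balance?wsdl"}
WSDL_DOCUMENTS = {
    "http://example.com/balance?wsdl": {
        "operation": "GetBalance",
        "parameters": ["ACCOUNTNUMBER"],
        "result": "BALANCE",
    }
}


def new_envelope():
    """Create an empty SOAP envelope and return (envelope, body)."""
    envelope = ET.Element(f"{{{SOAP_NS}}}Envelope")
    body = ET.SubElement(envelope, f"{{{SOAP_NS}}}Body")
    return envelope, body


def build_request(wsdl, arguments):
    """Step 4: use the WSDL description to format the SOAP request."""
    envelope, body = new_envelope()
    operation = ET.SubElement(body, wsdl["operation"])
    for name in wsdl["parameters"]:
        ET.SubElement(operation, name).text = str(arguments[name])
    return ET.tostring(envelope, encoding="unicode")


def fake_service(request_xml):
    """Steps 5 and 6: a pretend service that answers with a SOAP response."""
    request = ET.fromstring(request_xml)
    account = request.find("soap:Body/GetBalance/ACCOUNTNUMBER", NAMESPACES).text
    envelope, body = new_envelope()
    response = ET.SubElement(body, "GetBalanceResponse")
    ET.SubElement(response, "BALANCE").text = "100.00" if account == "12345" else "0.00"
    return ET.tostring(envelope, encoding="unicode")


def decode_response(wsdl, response_xml):
    """Step 7: use the WSDL description to pull the result out of the response."""
    response = ET.fromstring(response_xml)
    path = f"soap:Body/{wsdl['operation']}Response/{wsdl['result']}"
    return response.find(path, NAMESPACES).text


def call_service(service_name, arguments):
    wsdl_location = REGISTRY[service_name]        # steps 1 and 2
    wsdl = WSDL_DOCUMENTS[wsdl_location]          # step 3
    request_xml = build_request(wsdl, arguments)  # step 4
    response_xml = fake_service(request_xml)      # steps 5 and 6
    return decode_response(wsdl, response_xml)    # step 7


print(call_service("GetBalance", {"ACCOUNTNUMBER": "12345"}))  # 100.00
```

Note what the sketch quietly assumes: the client already knows to ask for "GetBalance" and already knows what an ACCOUNTNUMBER is. Ask for a service that isn't in the dictionary and you simply get a KeyError.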

Everybody talks like this is an automated process. Unfortunately, the automation story has a couple of huge holes. First, how in the world do you query the registry? How do you know what you're querying for? What is the format of the query? How do you handle a situation in which multiple services exist (or none!)? Second, if you've used a WSDL, you know that you must use a tool to generate a stub, which in turn allows you to communicate with the service. I'm no expert, but the idea of dynamically generating classes on the fly and then using them in production seems like a pretty risky technique (not to mention the fact that it doesn't seem likely to get past the SOX auditors).

OK, it's bad enough that UDDI sucks. The next problem is that it already has a competing technology, ebXML, that threatens to make UDDI obsolete. This standard has a lot of international support and includes a number of features not found in UDDI, including security and guaranteed messaging. Furthermore, other initiatives out there may impact discovery, such as WS-Inspection, which is a lightweight directory capability that allows a consumer to query a specific Web server as to its services.

So What's the Role of SOA?

Finally, we get to where SOA fits in. If you were to listen to the industry pundits or to the "blogosphere," you might get the idea that SOA is a new concept based on Web Services. However, the truth is that SOA as a concept has been around for decades. Recent technologies you may recognize that are SOA variants include Java's Remote Method Invocation (RMI), the Open Group's Distributed Computing Environment (DCE), and OMG's Common Object Request Broker Architecture (CORBA). And while it's true that the exact phrase "Service-Oriented Architecture" is probably specific to the implementation using SOAP, UDDI, and WSDL, this will probably change because UDDI is going to have to coexist with other technologies (provided it doesn't become entirely obsolete). Not only that, but there are other methodologies besides SOAP for communication between computers over the Internet. Two important ones are XML-RPC and REST.
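For a taste of how the two alternatives differ, here is a sketch using only Python's standard library. The method name getBalance and the example.com address are hypothetical, and nothing is actually sent over the network; the code only builds the requests.

```python
import urllib.request
import xmlrpc.client

# XML-RPC: the method name and its parameters travel inside an XML document.
request_xml = xmlrpc.client.dumps(("12345",), methodname="getBalance")
params, method = xmlrpc.client.loads(request_xml)
print(method, params)  # getBalance ('12345',)

# REST: the resource is named by the URL and the action by the HTTP verb,
# so there is no request document at all for a simple read.
rest_request = urllib.request.Request(
    "http://example.com/accounts/12345/balance", method="GET"
)
print(rest_request.get_method(), rest_request.full_url)
```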

Regardless of the definition of SOA, however, we can still get an idea of why SOA is an important architectural step. The idea is that SOA sits a little higher than the Web Service in two important ways. First, Web Services are a little more granular in nature; a Web Service might allow you to get an account balance or update a customer email address, while an SOA service is at the level of adding an entire order. This is hardly a hard-and-fast rule, of course, and nothing in either standard enforces such a distinction. The second difference has to do with basic design philosophy. Web Services are more specific and technical, with little infrastructure above the basic request/response protocol. SOA services, on the other hand, perhaps due to the fact that they really have been around for a long time, look to be more amenable to enterprise-level functions like monitoring and security. This means we may see the ability to route messages dynamically to a newly located service without having to create and compile a new object; this to me is the biggest hurdle to any sort of automated discovery procedure.

In any case, SOA is here for at least the next 15 minutes. Watch your tool vendors as they switch their terminology from Web Services to SOA over the coming months, just as they replaced SOAP with Web Services and Struts with JavaServer Faces. And no matter what, remember that in the end, this is basically about calling code on another machine. SOA doesn't add any business logic to your system; it just exposes your system to the outside world. And if your original business logic runs poorly, so too will your services.

Finally, A Little Reality Check

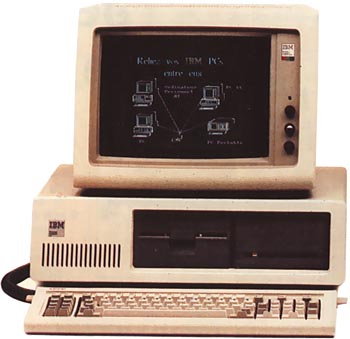

I remember back in my early days of programming (this was before the Elder Days, before even the World Wide Web) a project in which PCs all around the country needed to talk to a central system. The PCs were honest-to-goodness IBM PCs, before the term became generic. Actually, I think they were PC/XTs, running at a blazing 8 MHz, with a whopping 256 KB of memory on them. We plopped these down into truck stops all around the country, and they talked via dial-up modem (at 2400 BPS) to another PC at our central office. This PC in turn talked to an RPG program running on the S/38 via terminal emulation. Basically, the RPG program on the S/38 had a single 256-byte input field and a corresponding 256-byte output field. The PC in the field sent a 256-byte request to the PC in the central office, and that PC in turn "typed" the request on the terminal and hit Enter. The RPG program processed this request and returned one or more 256-byte responses, which the local PC relayed down to the remote PC. That was our entire protocol, and we could do anything we wanted to through it.
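For the curious, a fixed 256-byte message of that general sort could be sketched in Python like this. The layout here (a 4-character transaction code followed by space-padded data) is purely my illustration and is not the actual record format we used.

```python
RECORD_LENGTH = 256
CODE_LENGTH = 4  # hypothetical: the first 4 characters identify the transaction


def pack_request(transaction_code: str, payload: str) -> bytes:
    """Build one fixed-length record, padded on the right with spaces."""
    if len(transaction_code) > CODE_LENGTH:
        raise ValueError("transaction code too long")
    record = (transaction_code.ljust(CODE_LENGTH) + payload).encode("ascii")
    if len(record) > RECORD_LENGTH:
        raise ValueError("request does not fit in one record")
    return record.ljust(RECORD_LENGTH, b" ")


def unpack_request(record: bytes) -> tuple:
    """Split a fixed-length record back into (transaction code, payload)."""
    if len(record) != RECORD_LENGTH:
        raise ValueError("record must be exactly 256 bytes")
    text = record.decode("ascii")
    return text[:CODE_LENGTH].rstrip(), text[CODE_LENGTH:].rstrip()


record = pack_request("AUTH", "4111111111111111 0025.00")
print(len(record))             # 256
print(unpack_request(record))  # ('AUTH', '4111111111111111 0025.00')
```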

We used this not just for credit card authorizations, but for fuel tax reporting, for data entry, and even for sending reports back to the remote PCs for local printing. We had collision protection in case multiple remote stations tried to update the same master record, we had communications restart/recovery (believe me, you needed restart capabilities when sending 300-page reports over a crotchety 2400 BPS connection to Enid, Oklahoma), and we even had the ability to update the software in the remote PCs electronically...

...back in 1986...

...without Java...

...and without Web Services...

...on this:

Figure 1: An IBM PC/XT like this with 640 KB of RAM and a 10 MB hard drive cost about $8,000 in 1986.

Why do I bring this up in an article about SOA? Because before we get paralyzed by a flood of acronyms and buzzwords, I want to be sure we are all on the same ground as to what we're talking about: client/server computing, which is simply one computer asking another computer to do some work. It's not brain surgery, it's not alchemy, and it's certainly not something new in the world of computing. In fact, client/server computing probably began right about the time cave-scientists built the second computer.

But in today's world of ever-increasing complexity, you can't just have one computer talk to another. No, in today's world you need a queuing mechanism, a transport protocol, a message standard, a registry service, and a host of other components, all of which are necessary in order to (I love this) make the programmer's job easier. Sometimes, I get the idea that the real reason is to make hardware, OS, middleware, and tool vendors rich, but I don't like to say that out loud. The black helicopters are listening....

Feedback

Folks, I write these articles to try to enlighten and inform. Sometimes, I get the idea that technology is just flying out of control; I'm sure you feel the same way. So, to keep from simply being drowned in a flood of buzzwords, I go out and read everything I can get my hands on about pertinent topics and then come back and report to you what I've found. What I need from you is feedback: Did this article help? Did it address something you needed to know about? Would you like to hear more about this topic or about something related? Or something completely different?

I already have requests for more information on JSP frameworks and for more information on J2EE and .NET. What is it that you need to hear about? Please, post in the forums and let me know.

Joe Pluta is the founder and chief architect of Pluta Brothers Design, Inc. He has been working in the field since the late 1970s and has made a career of extending the IBM midrange, starting back in the days of the IBM System/3. Joe has used WebSphere extensively, especially as the base for PSC/400, the only product that can move your legacy systems to the Web using simple green-screen commands. Joe is also the author of E-Deployment: The Fastest Path to the Web, Eclipse: Step by Step, and WDSC: Step by Step. You can reach him at

MC Press Online